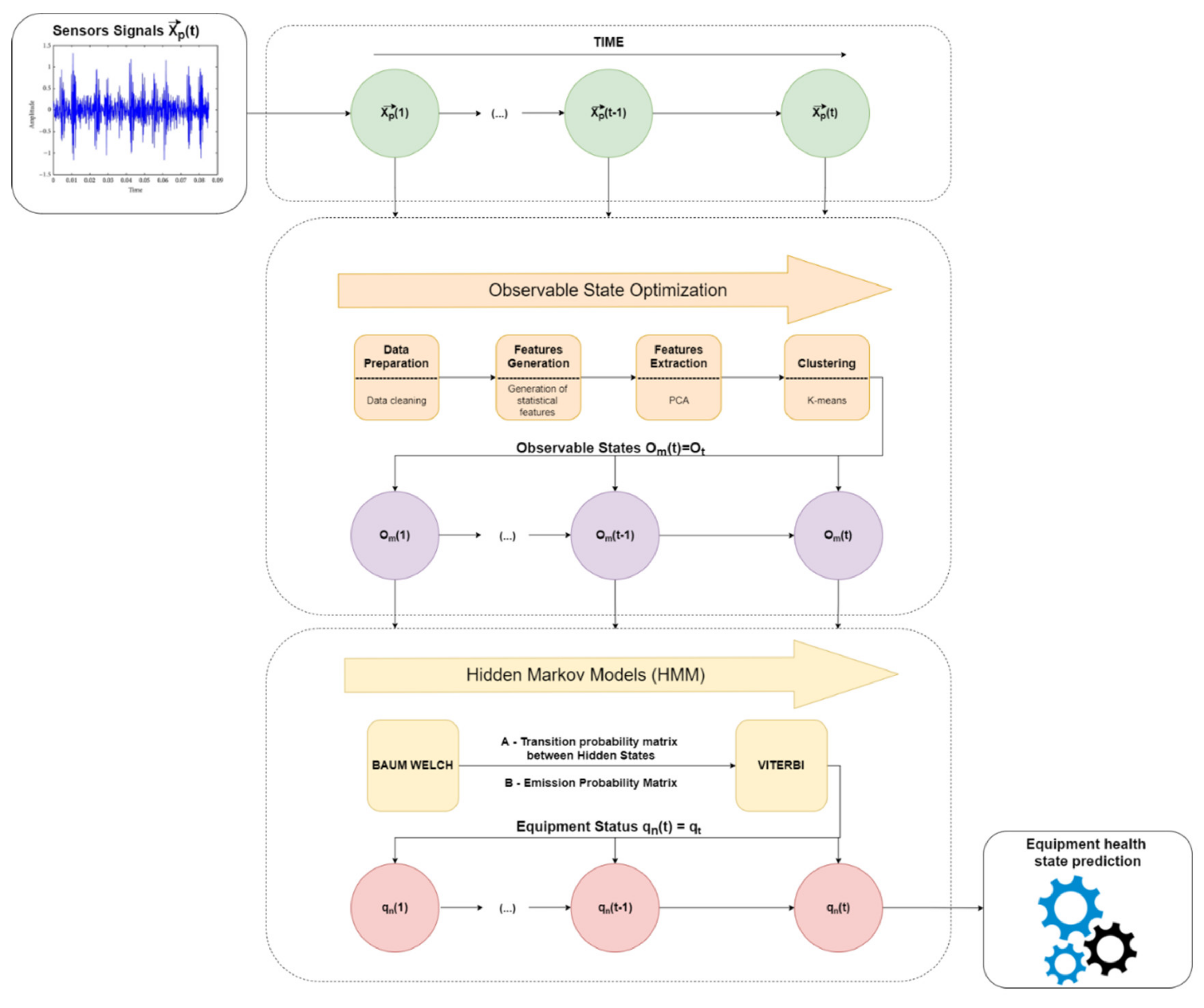

hmmlearn implements the Hidden Markov Models (HMMs). The HMM is a generative probabilistic model in which a sequence of observable variables is generated by a sequence of hidden states.

There are three fundamental problems for HMMs:

- Given the model parameters and observed data, estimate the optimal sequence of hidden states.
- Given the model parameters and observed data, calculate the model likelihood.
- Given just the observed data, estimate the model parameters.

The first and the second problem can be solved by the dynamic programming algorithms known as the Viterbi algorithm and the Forward-Backward algorithm, respectively. The last one can be solved by an iterative Expectation-Maximization (EM) algorithm, known as the Baum-Welch algorithm.

References:

- Rabiner, "A tutorial on hidden Markov models and selected applications in speech recognition", Proceedings of the IEEE 77.2.
- Bilmes, "A gentle tutorial of the EM algorithm and its application ..."
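To make the first two problems concrete, here is a minimal pure-Python sketch of the Viterbi recursion (best hidden-state path) and the forward recursion (model likelihood). It is not hmmlearn's implementation. The two-state model, its probabilities, and the function names `viterbi` and `forward_likelihood` are assumptions chosen for illustration, and the code works with raw probabilities rather than logs, so it only suits short sequences.

```python
# Toy two-state HMM with three possible observation symbols (0, 1, 2).
# All numbers are illustrative assumptions, not taken from hmmlearn.
start = [0.6, 0.4]                          # start probability vector
trans = [[0.7, 0.3], [0.4, 0.6]]            # trans[r][s] = P(next state s | state r)
emit = [[0.5, 0.4, 0.1], [0.1, 0.3, 0.6]]   # emit[s][o] = P(symbol o | state s)


def forward_likelihood(obs):
    """Problem 2: P(obs | model), computed with the forward recursion."""
    n = len(start)
    alpha = [start[s] * emit[s][obs[0]] for s in range(n)]
    for o in obs[1:]:
        # Sum over every predecessor state, then apply the emission probability.
        alpha = [
            sum(alpha[r] * trans[r][s] for r in range(n)) * emit[s][o]
            for s in range(n)
        ]
    return sum(alpha)


def viterbi(obs):
    """Problem 1: most likely hidden-state path and its joint probability."""
    n = len(start)
    delta = [start[s] * emit[s][obs[0]] for s in range(n)]
    backpointers = []
    for o in obs[1:]:
        # For each state, remember the best predecessor instead of summing.
        prev = [max(range(n), key=lambda r: delta[r] * trans[r][s]) for s in range(n)]
        delta = [delta[prev[s]] * trans[prev[s]][s] * emit[s][o] for s in range(n)]
        backpointers.append(prev)
    state = max(range(n), key=lambda s: delta[s])
    best = delta[state]
    path = [state]
    # Walk the stored backpointers from the last step to the first.
    for prev in reversed(backpointers):
        state = prev[state]
        path.append(state)
    return path[::-1], best


if __name__ == "__main__":
    observations = [0, 1, 2]
    print(viterbi(observations))
    print(forward_likelihood(observations))
```

For the observation sequence `[0, 1, 2]`, the decoded path under these assumed parameters is `[0, 0, 1]`. The third problem (Baum-Welch) is not sketched here. In hmmlearn itself, the three problems correspond to the model methods `decode`, `score`, and `fit`.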
